Data sovereignty and access management

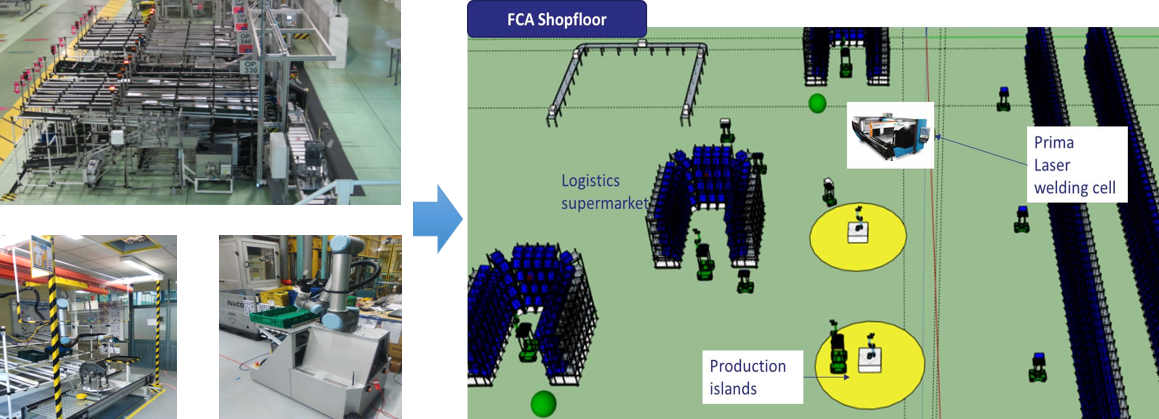

CRF autonomous assembly line factory 4.0

Data Ingestion Rate

Role-based Users

Data Storage

Security Protocols

Data-Driven Digital Process Challenges

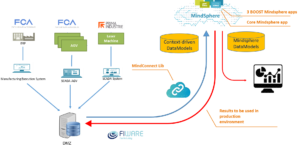

Flexibility and scalability are key features for data acquisition in the manufacturing industry, and they are mandatory for a platform for Big Data collection and analysis. Such a platform has to be accessible to a large number of data sources within the plant by means of connectors and connectivity devices, and it has to provide secure access to the data.

Big Data Business Process Value

The FCA autonomous assembly line trial considers the following elements as key figures for data sovereignty and access management:

Data Ingestion Rate: The Big Data platform is aimed at the collection of huge amounts of data coming from the industrial plant. Ingestion rate determines the quantity of data that can be uploaded reliably to the platform within a specific amount of time.

Number of Authorized Users: In order to access the platform, the Boost 4.0 reference architecture defines Data Sovereignty requirements in terms of roles and authorizations provided to its users. The platform can host a limited number of users depending on its infrastructure.

Data Storage: Big Data applications rely on databases and resources to store and collect Big Data. Data storage is expressed in terms of memory that is required to host the collected data.

Security Protocols Implementation: Even though no single index or indicator can quantify the security level, it is important to highlight the standards and rules that certify the security measures built into the software. Software and infrastructure that interact with proprietary data need to guarantee security in terms of privacy and business requirements. To achieve this, the architecture must comply with standards and protocols that have been developed and validated by regulatory entities.

Large Scale Trial Performance Results

For the trial, a MindSphere IoT Value Plan has been employed for data acquisition and to connect the assets to the cloud. The platform complies with several of the standards and protocols for data security and sovereignty. At the beginning of the pilot, the basic quotas for data ingestion rate (2 kB/s), storage (50 GB) and users (10) were used, but after the scale-up phase of the project all of these parameters had to be upgraded to meet the requirements of the experimentation. They were increased to 10 kB/s, 200 GB and 20 users as the amount of data and the number of contributors scaled up. The IT platform allowed these parameters to be upgraded immediately, providing scalability and flexibility along with security and access management.
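
As a rough illustration of how the ingestion rate and storage quotas relate, the sketch below estimates how long each storage quota would last if data arrived continuously at the full ingestion rate. It assumes decimal units (1 kB = 1,000 bytes, 1 GB = 10^9 bytes) and a constant data stream, neither of which is stated in the trial description; the function name `days_to_fill` is hypothetical and not part of any platform API.

```python
SECONDS_PER_DAY = 86_400


def days_to_fill(storage_gb: float, rate_kb_per_s: float) -> float:
    """Days until the storage quota is full at a sustained ingestion rate.

    Assumes decimal units (1 kB = 1e3 bytes, 1 GB = 1e9 bytes) and a
    constant stream at the full rate, which is a simplification.
    """
    storage_bytes = storage_gb * 1e9
    rate_bytes_per_s = rate_kb_per_s * 1e3
    return storage_bytes / rate_bytes_per_s / SECONDS_PER_DAY


# Quotas reported for the trial: basic plan and after scale-up.
basic_days = days_to_fill(storage_gb=50, rate_kb_per_s=2)
scaled_days = days_to_fill(storage_gb=200, rate_kb_per_s=10)

print(f"basic quota:  {basic_days:.0f} days")
print(f"scaled quota: {scaled_days:.0f} days")
```

Under these assumptions the ingestion rate grew fivefold while storage grew fourfold, so the scaled-up quota would fill somewhat sooner than the basic one at full load. Real streams are rarely constant, so this is only an upper-bound style estimate.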

Observations & Lessons Learned

Estimating a priori the amount of data that will be handled is not an easy task, since the data sources and the data stream can vary considerably as new requirements arise and the project evolves. Therefore, the Big Data platform needs to be rapidly scalable to deal with unplanned sources. In addition, as different actors have different roles with respect to these data, the capability of the platform to manage user permissions, based on their tasks and their access to the data, has proven to be an important feature as well as a requirement introduced by the EIDS reference architecture for data sovereignty.
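
The role-based permission management described above can be sketched as a simple lookup from roles to granted permissions. This is a minimal illustration only: the role names (`viewer`, `operator`, `admin`), the `Permission` flags and the `is_allowed` helper are all hypothetical and do not reflect the actual MindSphere or EIDS role model.

```python
from enum import Flag, auto


class Permission(Flag):
    NONE = 0
    READ = auto()
    WRITE = auto()
    MANAGE_USERS = auto()


# Hypothetical roles; the trial does not list its actual role names.
ROLE_PERMISSIONS = {
    "viewer": Permission.READ,
    "operator": Permission.READ | Permission.WRITE,
    "admin": Permission.READ | Permission.WRITE | Permission.MANAGE_USERS,
}


def is_allowed(role: str, needed: Permission) -> bool:
    """Return True only if the role grants every permission in `needed`."""
    granted = ROLE_PERMISSIONS.get(role, Permission.NONE)  # unknown role: nothing
    return (granted & needed) == needed


print(is_allowed("viewer", Permission.READ))
print(is_allowed("viewer", Permission.WRITE))
print(is_allowed("operator", Permission.READ | Permission.WRITE))
```

Denying unknown roles by default keeps the check conservative, which matches the intent of restricting access to users with explicitly assigned rights.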

In conclusion, most of the effort went into providing the project with the right amount of storage and ingestion rate for data acquisition, and into handling data sovereignty in terms of users with specific rights and permissions over the data.

CRF Melfi Campus | Melfi, Italy

Prima Industrie Advanced Laser Centre | Collegno, Italy

Pilot Partners

Standards used

- International Data Spaces Association

Big Data Platforms & Tools

- MindSphere

- FIWARE

Big Data Characterization

Data Volume

300 GB

Data Velocity

10 kB/s

Data types

- Time series

- Events

- Alarms

- Machine states

Number of sources

- Machine configuration

- Machine conditioning

- Operational data

- Production scheduling

- Component variants information

- Pilot area characteristics

Data data

No

Implementation Assessment

Technical feasibility

Economic feasibility

Replication potential